Get the latest AI, data, and technology insights from Matatika’s experts direct to your inbox.


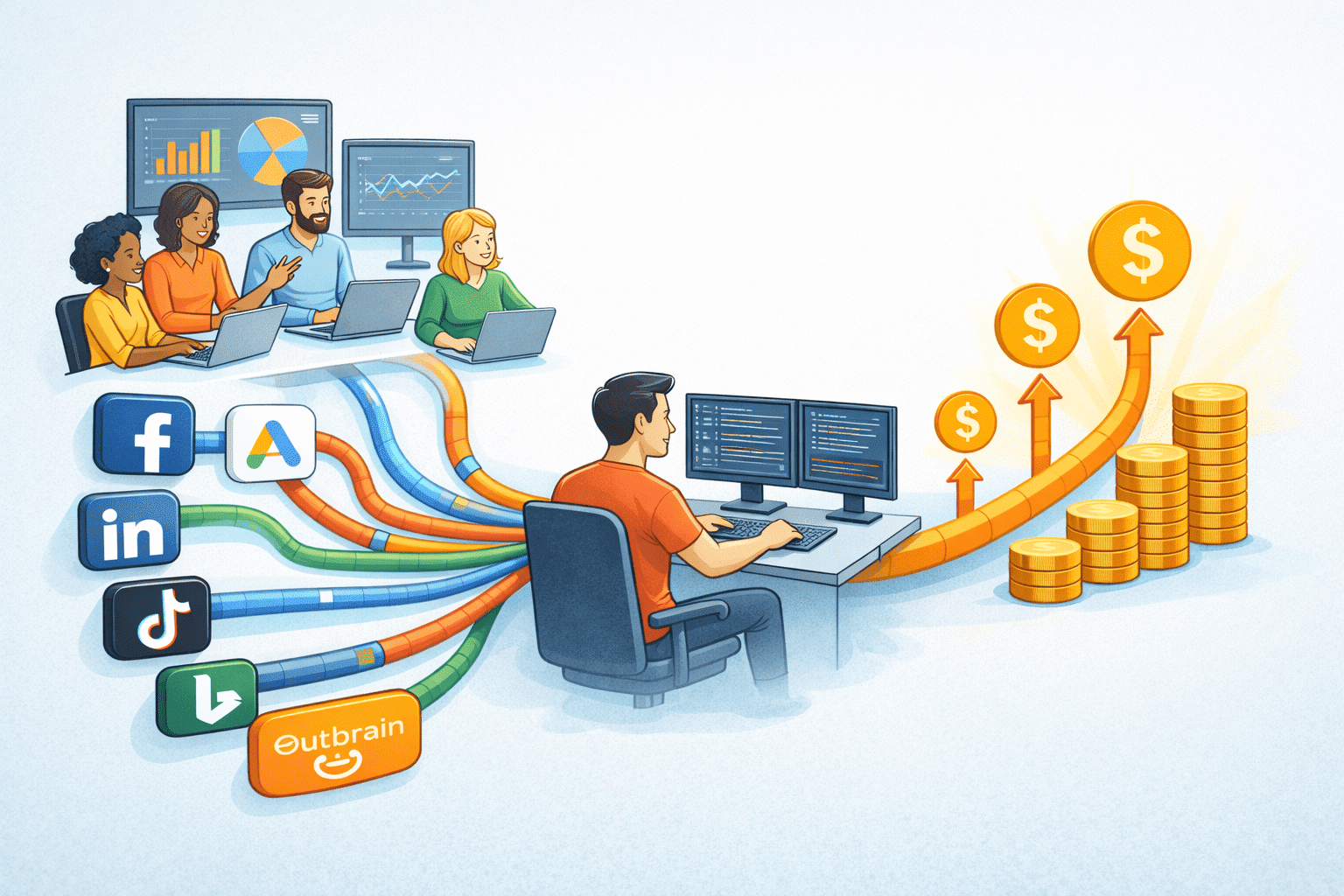

Why marketing data connectors quietly inflate your ETL costs

And how MVF consolidated paid media data without losing insight

Marketing data rarely breaks loudly. It degrades quietly. Pipelines keep running. Dashboards still load. Spend gets approved. But somewhere between your tenth and thirtieth connector, the economics stop making sense. Teams often assume marketing connectors are cheap because each one looks small in isolation. A Google Ads connector here. A LinkedIn Ads connector there. Another for TikTok. Another for reporting exports. Each one feels justified. Together, they quietly become the most expensive part of the data stack. MVF learned this the hard way.

Read the article

🔔 Celebrating a Most Eventful Matatika Year! 🔔

The Matatika Year in Review: On the Twelfth Day of Data...

As the year wraps up, we’re taking a lighthearted, musical look back at the incredible journey we’ve shared! Thanks to the energy and support of our amazing community, 2025 has been an unforgettable year of growth, connection, and major milestones. Grab a hot drink and join us as we sing the praises of the Matatika year that was!

Read the article

Building Data Platforms That Actually Solve Business Problems

Meet Teddy Bernays

Teddy is a highly autonomous freelance data engineer and Google Cloud trainer who helps startups and mid-sized companies build efficient, scalable, and cost-effective data platforms. He started his career in the complex world of audio engineering before moving into IT, where he became fascinated by the mechanics "under the hood" of data systems. Today, he firmly believes that the solution to data inconsistency isn't always more code, but more clarity. His approach is simple: “If it’s simple, do it simple. You don't need three different tools to solve a one-tool problem.”

Read the article

Looking for Arch?

Arch has officially shut down, and the Arch platform is no longer operating. If you’re an Arch customer looking for what’s next, you’re in the right place. Matatika is now supporting former Arch customers and helping teams continue their analytics and data workflows without disruption.

Read the article

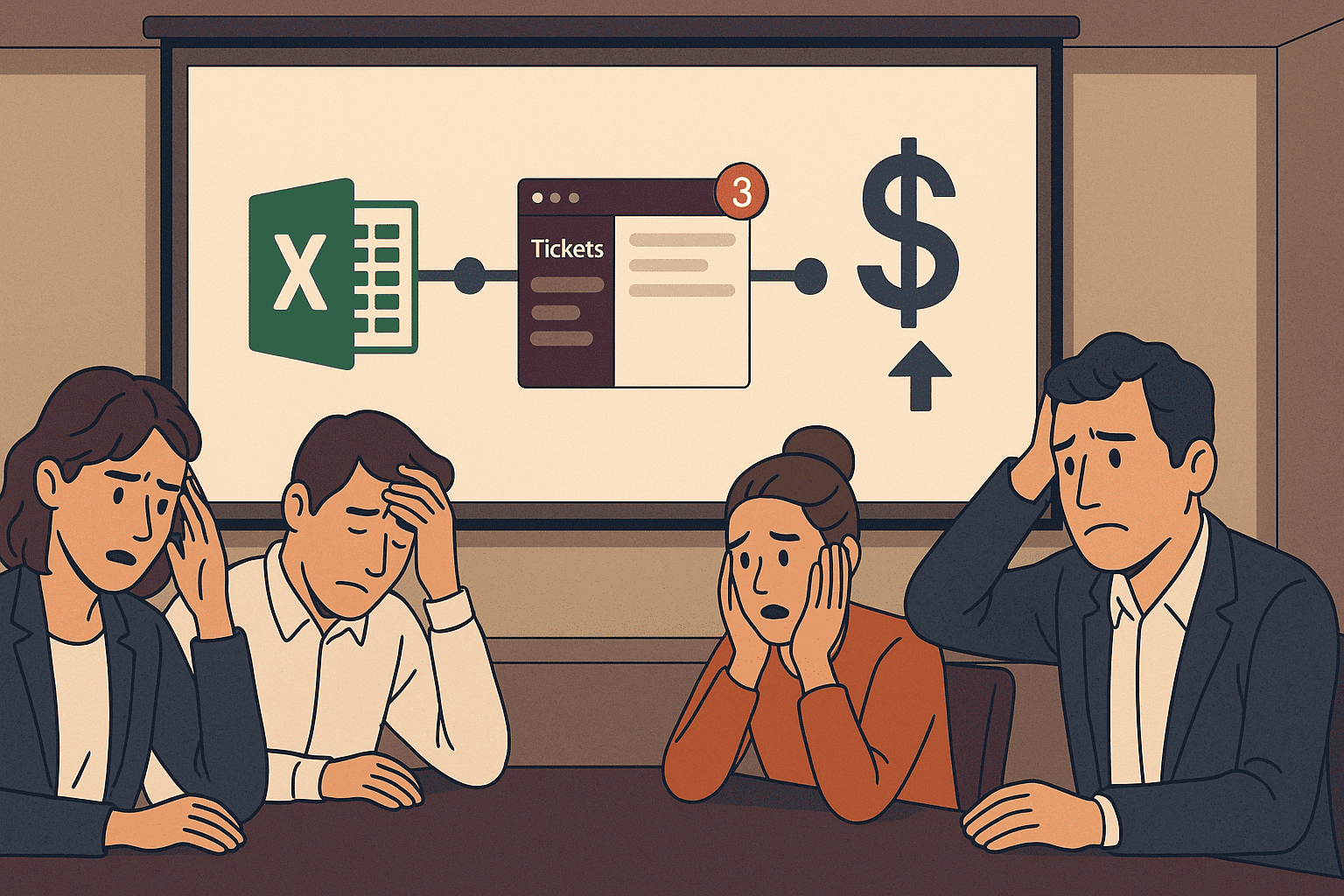

Google Sheets for Analysts: How to Eliminate the Data Update Bottleneck

The Real Cost of the Bottleneck

Engineering time disappears into trivial updates. Every data change request is a context switch. Even five-minute tasks fragment your day. You never get into deep focus because you're constantly interrupted by "quick questions" that aren't actually quick.

Read the article

Data Leaders Digest

Stay up to date with the latest news and insights for data leaders.